What Has AI to Do with Christ-Centered Education? A Framework for Faculty Engagement

[An alternative title might have been “Artificial Intelligence: To Engage or Not to Engage? This Is Not (and Never Has Been) the Question.” The force of this alternative title is that critical engagement is as viable an approach to AI for a faculty member of a Christ-centered institution as prohibition. Resistance to AI is not the only thoughtful Christian response. However, this essay is not an appeal to adopt the position of critical engagement if one has thoughtfully arrived at another posture. I value diversity of opinion on this topic so long as each position has been reached in an informed way. Instead, this essay is an explanation of the position for those who may be inclined to this or a similar posture.]

Introduction

It seems like an obvious claim to state that the value and application of AI in the educational context is not monolithic. The applicability of AI to computer science is distinct from English composition, and both are worlds apart from biblical studies. Likewise, approaches to AI will likely not be equivalent in a state university and a Christ-centered institution. The current essay has some unapologetic biases along these lines. I am interested in addressing two primary populations—those who operate in a Christ-centered or theological education context (i.e. those who attempt to bring a Christian worldview to bear on their academic discipline), and those whose fields are in the humanities and social sciences (i.e. those whose disciplines are not STEM).[1] The reason I want to speak to these two groups specifically is that, in my experience, these two audiences are especially prone toward resistant positions regarding AI.[2] To the contrary, I believe that a distinctively Christian position is one of critical engagement with AI (as opposed to prohibition) and that the humanities and similar fields have more benefit to be gained than is often recognized (contrary to the view that AI undermines these fields).[3]

It should be said at the outset that I do not wish to make light of the concerns of faculty members who hold a more resistant or prohibitive posture. In fact, I believe that we will be able to move forward most responsibly if those of this persuasion have an equal voice. Many of those who hold to this position and with whom I have had the opportunity to engage are informed and thoughtful in their approach. For many of them, AI feels more like an encroaching threat than a promising opportunity. Their concerns include an erosion of academic integrity, diminished data stewardship and privacy, the disruption of intellectual formation, an underappreciation of distinctively human functions and identity, and the relegation of intrinsically spiritual aspects of our stewardship over creation to a backseat. I agree that these concerns and fears are not unfounded. As Ethan Mollick stated in his introduction to Co-Intelligence: Living and Working with AI, “I believe the cost of getting to know AI—really getting to know AI—is at least three sleepless nights.”[4] However, I will argue below that these concerns are not sufficient reason to advocate for wholesale disengagement from AI.

The purpose of this essay is to demonstrate that critical engagement with AI is an acceptable and respectable position for faculty members in a Christian institution. Critical engagement precludes both blind acceptance and adoption, on the one hand, and absolute exclusion, on the other. It requires one to approach each use of AI with an open mind and a willingness to consider the advantages and disadvantages, pros and cons, appropriateness and inappropriateness, enhancements and erosions. Critical engagement requires one to actually experiment in the context of their own work in all of its variety: administrative, research and writing, and teaching. It also includes designing one’s courses to foster skill development and an informed opinion on AI among one’s students by permitting them to engage with these emerging tools in appropriate ways.

The essay will go about this task by way of three sequential segments. First, eight philosophical principles will be introduced, each of which is intended to provide one logical reason for engaging, rather than resisting, AI. These eight principles are foundational for me and are prerequisite for further consideration of the topic. Second, three practical guidelines will be offered to assist one in maximizing the value of, and experience with, their AI tools. Whereas the first section is conceptual, this section is intended to be concrete, even if general in its scope. Lastly, I will offer a variety of very specific use cases for employing AI tools. Each use case will offer a tool of choice, qualifiers, and benefits, and some will also offer sample prompts to adapt for one’s specific context.[5] This third section also offers some comments on the recent integration of AI functions in enterprise systems and lists ten holistic benefits that AI may allow if we choose to engage. I have also provided a working theological framework for AI as an appendix for readers. I pray this essay may be useful for maintaining God-honoring instruction in Christ-centered institutions.

Foundational Principles

The following eight axioms represent foundational principles that have shaped my perspective on the role of AI within Christ-centered higher education in general and the humanities in particular. They not only inform my overall posture toward AI engagement but also provide a framework from which I approach the specific and practical ways in which I personally use AI (see below). I recommend these to all readers and encourage their adoption for decision-making on this topic.

AI has Arrived

Artificial Intelligence has arrived, and like Clark Griswold’s socially oblivious cousin, it is not going away whether we like it or not. However, beyond its permanence, I would argue that actual engagement with AI should also be normalized. The “arrival” terminology in the subheading is not mere wordsmithing but derives from the well-known concept of arrival versus adoption technologies.[6]

We all can think of digital technology or services that we have chosen or opted to use (i.e. adopted). Examples might include smart watches, e-readers, social media, whole home automation products, or navigation software. Examples of adoption technologies at an institutional level might include video-conferencing software such as Zoom or Google Meet; a learning management system such as Canvas, Blackboard, or Brightspace; or some sort of digital document and signing tool like Adobe. At an institutional level, the to-be-adopted technologies pass through vetting and procurement processes in which they are compared against competitor tools, acquired through formal contractual processes, implemented systematically, and then promoted for adoption and use by the population of the institution for which they are intended (e.g. students, faculty, or staff). These tools and any others go through a process in line with the governing policy. The institutional stakeholders decided that there was a need and then applied a process much like the one outlined above in order to implement the tool. The same concept and process occur at the personal level when we decide we want to use a certain technology, whether a laptop, a specific fitness application, or media technology in our vehicles. The process is simply less defined and more organic in our individual lives.

Arrival technology, however, bypasses all vetting and selection processes, whether formal or intuitive. At the institutional level, it merely shows up one day in your class. For example, no faculty member or institution had to evaluate the presence of mobile devices (phones, tablets, or watches) in their classes and buildings in order to facilitate such use by students, staff, or even faculty. They just showed up—or arrived. In fact, institutions had to create policies and processes to manage or even (attempt to) prohibit their use in inappropriate contexts and manners. Beyond this, they had to develop ways to capitalize on and leverage the arrival of these new technologies for the mission and interests of the institution. In other words, the arrival of this tech triggered the adoption of new processes, operations, and ways of thinking about how we go about our daily lives (e.g. WiFi saturation on campus, personalized way-finding, campus communications, emergency alerts). No school chose to adopt our mobile culture, but every school has tried to adapt to the new paradigm on an appropriate timeline relative to their resources and constituency.

At the societal and personal levels, the introduction of so-called arrival technology, and its reshaping of our behaviors (and yes, even our values), is unavoidable. One does not choose to adopt these technologies; rather, participation in society in a real way requires them. The choice not to use an arrival technology is the choice to de-socialize on some level. For example, one may choose not to own a mobile device with a data plan. However, virtually every significant service requires two-factor authentication, and the most common second factor is one’s phone. Therefore, the decision to forgo the adoption of this technology is tethered to a decision to remove oneself from societal experiences and norms. Other examples of arrival technology at the personal level are the internet, the personal computer, and automobiles (whether privately owned or accessed as public transportation).

Thus, when faculty members are deciding whether they should engage with AI in their classrooms, they must recognize that this choice is not actually theirs. In reality, they are already engaged by virtue of their profession and the intrusion of this tech into their craft and into the lives of their primary constituency, their students. Our students are using it. Our vendors are using it. We are using it in other areas of life to some degree of satisfaction. In reality, our only choice, and maybe not even this, is how we do so. If I were to be a bit more snarky, I might say that our only choice is whether to take a proactive posture or a reactive posture, that is, whether we want to have some level of input into how AI is leveraged in our classes. The proactive option involves coaching students in ways that guide them toward responsible use for the future. The reactive choice leaves them to their own devices and to other influences of unknown origins.

This Is the Worst It Will Ever Be[7]

Until September 2023 (or 2025 for some), ChatGPT and other consumer grade AI bots were limited to data up to but not surpassing September 2021.[8] In mid-2023, the most well-known AI bots did not accept direct file uploads. Not that long ago, one had to engage dedicated platforms such as DALL-E, Stable Diffusion, or Firefly for image generation and could not generate graphics in the context of a text-based chat with an AI bot such as ChatGPT or Claude. Even today one will hear complaints and critiques about high levels of hallucination by AI.[9]

Today, none of the most prominent bots is bound by date limits.[10] All allow file uploads of various types and can readily engage with the file. Likewise, all of these bots will generate and adapt an image based on a prompt. While hallucination continues to be a primary source of critique, more users seem to understand that different AI models and products are more or less prone to it based on their “tuning”; that each new release resets the expectation; that one may issue a follow-up prompt asking whether any of the sources or claims are unsubstantiated (i.e. hallucinations); that one may construct the initial prompt in a more sophisticated way to minimize or mitigate hallucination; and that certain modes are available for some AIs that slow the processing down and require the tool to validate information before presenting it to the user.[11] These examples illustrate that AI tools are developing to resolve inadequacies and to respond to the demands of the market.

Thus, any critiques of AI tools made today based on functionality or quality may be resolved in the next update and thus do not warrant an absolute rejection or prohibition of AI in academic work or teaching contexts. In other words, current limitations are not grounds for a principled objection. These limitations may warrant current restrictions. However, my experience is that this practical argument is the foundation for most absolute and principled prohibitions of AI.

Interestingly, critiques like those in the first paragraph above are often rehearsed by the same individuals who may be concerned that AI will undermine student learning or even completely topple our educational system. The idea is that students will simply convert assignment instructions into prompts and mindlessly turn in the result as their own.[12] However, it seems to me that AI cannot simultaneously be so bad that it should be prohibited and so capable that we cannot identify when students are simply turning in its work wholesale. Either AI is so capable that students can completely bypass the learning process, or AI has such limitations that any well-trained instructor should either be able to spot the forgery or should grade such a submission so low that the use of AI is disincentivized.[13]

As we look to the future, we should expect to see continued closing of the gap between our demands for AI and the realities of the delivered product. For example, we are already seeing more AI products targeting and marketed for academic contexts.[14] These tools seem to prioritize the hard sciences and behavioral sciences in their development, but we can expect the incorporation of humanities subject matter to continue to increase. One core weakness of the current status quo is the lack of integration with peer-reviewed, licensed enterprise databases. However, there are indications that this is now in place or will be soon.[15] This function alone will be a “game changer” for the use of these academic AI tools.

Lastly, the development of a comprehensive drafting station is well on its way in several of the major tools.[16] In the end, this will include a working canvas, a store of deleted drafts and trimmings, a bibliographic bank, etc. I would venture to speculate that many of us would adopt and enjoy using an interface that allowed us, in a single digital instance, to discover, read, and annotate articles drawn exclusively from licensed peer-reviewed databases; store the desired articles in a single location; apply tags or otherwise group those articles for easy reference; draft our own project with versions autosaved; highlight quotations from the articles and drag them into our draft as either direct quotations or paraphrases, with the citation created in the proper format; and take notes for spinoff projects or on outstanding questions to address in the current project. Such an interface is not very far away, if it is not already on the market by the time this essay is published.

AI Sets the Floor, Not the Ceiling

This is perhaps the most controversial and easily misunderstood of the principles in this section. I am not saying that AI work will never exceed the quality of that which could be produced by students. I am not saying that AI work is always bad. I am not saying that AI work cannot pass for decent human work.

However, I am saying that AI work is not inherently better than human work. I am saying that an expert human generally and consistently produces better work than AI. I am saying AI establishes a baseline from which humans improve rather than an ideal to which humans aspire.

These claims are consistent with studies that show novice and lower performing employees benefit the most from the introduction of AI into their work contexts.[17] Two implications of this principle are: 1) If AI can achieve mid-level quality on its own, then humans should be able to achieve higher level quality if they leverage AI in the initial phases of the process. In other words, AI can be used to establish a baseline from which to build instead of “starting from scratch.”[18] 2) This is an opportunity to reset grade expectations and combat patterns of grade inflation.[19] Whatever work can be accomplished by simply pasting instructions into an AI bot should be reset to a lower grade, perhaps even an F. Therefore, to achieve higher level grades, one must do more than what AI can do alone.[20] Given that cheating is often motivated by grades and that it increases with access and ease of opportunity, this recalibration of scoring might have the welcome effect of deterring cheating as well.

AI Can Do Some Things Better

Too often the question of AI and its use within Christian higher education is absolutized. One is either “all in,” and often considered an uncritical thinker or unprincipled educator as a result, or completely opposed to AI, and labeled a Luddite. In reality, there are many positions between these two ends of the spectrum. If one can concede that there are some things that AI can do better, but also some things that it should never be employed to do out of principle, then it stands to reason that it is worth exploring where particular uses fall along this spectrum, and it may be profitable to begin to experiment with some potentially acceptable uses.[21]

Thus, the application for the professor of humanities may be to identify what things he or she may be able to do more quickly or with higher quality if an AI tool is used.[22] This need not extend into matters of pedagogy, learning, writing, or critical thinking, and may give the hesitant adopter an entry point from which to form a more informed and direct opinion on the strengths and deficiencies of AI. One of my former professors would often say that people tend to break down into two groups—those who are “lumpers” and those who are “splitters.”[23] Perhaps when it comes to AI, we should err on the side of the splitters, taking a more segmented approach to the issue. Instead of framing one’s position as simply “for” or “against” AI in the classroom, a more nuanced and granular articulation of one’s positions may be more profitable.

Lower-risk and less controversial tasks that AI might be able to perform “better” than a human (or a human without an AI tool) might include summarizing emails, scheduling meetings, generating an image for a lecture, developing a rubric, summarizing feedback on an assignment, abstracting an article, proofing an essay, creating an index, drafting interview questions for a faculty hire, etc. It is often touted that AI is especially good at work that involves or requires processing large amounts of data, identifying patterns and exceptions, brainstorming and ideation, “thinking” outside of the box (i.e. presenting options that are uninhibited by norms humans tend not to transgress), creating multiple varieties of an exemplar, repetitive action, summarizing, and extreme efficiency (i.e. returning options very quickly). Some of these “skills” are evident in the sample tasks above. One may find further potential uses of AI by considering which aspects of one’s work draw on these strengths of this new technology.

Human Agency Can and Must Remain Central

In any appropriate use of AI, agency must remain with the human. AI should never be allowed to elevate beyond the status of a tool. It has neither personhood nor will. This matters for a few reasons. First, we must avoid unhealthy relationships with AI. In fact, no AI bot is capable of relating to a human. They are simply following the coding, configuration, and training of their engineers. Thus, any modal engagement with AI (such as speaking and dialoguing) that has the appearance of relating must always be understood as appearance only and not reality. The human in the equation must remind themselves that they are not actually relating through conversation but merely adopting a more expedient and effective way of obtaining a desired outcome than text prompts and database recall.[24]

Second, this principle retains accountability for the acting human. The Chicago Manual of Style has provided evolving guidance to credit AI text within the body of your writing but not in your bibliography.[25] This distinction derives from the position of the Committee on Publication Ethics, which states, “The use of artificial intelligence (AI) tools such as ChatGPT or Large Language Models in research publications is expanding rapidly. COPE joins organizations, such as WAME and the JAMA Network among others, to state that AI tools cannot be listed as an author of a paper.”[26] This statement reminds us that there is always a human in the loop at some point. We must always be able to identify where the human is in the process, and ensure that the accountability, the agency, the will, the role of first actor resides there.

Lastly, and flowing from the first two justifications for emphasizing human agency above, when we or our students engage with AI, if we remember this principle, we can avoid the cheap and sloppy uses of AI that are so feared among naysayers. AI remains merely a tool in the hands of the human. It may have more processing power; it may be able to accomplish a task far more quickly; it may be able to generate more varied options. However, the decision of which option is preferred and whether the exceptional processing delivered the desired outcome is and will remain the stewardship of the human in the loop. Similarly, the impetus for the processing and the generation of the options is human. The human chose to engage AI; the human engineered the prompt; the human iterated past the initial prompt and response; the human took the final option and developed it further without AI. Keeping the human agency central and helping our students see where they fit into the process will allow us to develop assignments that better balance the values we want to uphold, better develop the skills we desire as a result of our classes, and help our students resist cheap and immature uses of AI.

AI is But One of the Forces Acting on Higher Education

The temptation may be to look to AI and use it as a scapegoat for all of the modern deficiencies of higher education, especially those that have lowered an idealized standard held by those of us who are academic purists. Some of us want to blame AI for undermining true learning, diminishing writing skills, misleading students with erroneous hallucinated information, eroding character by making cheating too accessible, and on and on.

In reality, however, cheating is a business, whether through study aid providers (SAPs) and paper mills in the past or, more recently, through tools to help students avoid AI detection.[27] Non-residential, asynchronous, online education produced an unprecedented rise in self-reported cases of cheating that rivals any increase seen since November 2022.[28] Similarly, the internet did more to diminish the quality of information than anything in history, even as it made information more accessible than ever before.[29] Moreover, the growing normalization of a 280-character culture, in which the phone is the constant companion and texting is the primary medium of communication from youth, has done little to aid our mental health or advance our cognitive and social IQs.[30] AI may put the final nail in the coffin, but it did not put the body in the casket.

To be clear, none of these are positive arguments to use AI. However, they are arguments against the demonizing of AI that often takes place. They also give us warrant to consider more holistic solutions to our problems than simple prohibitions of AI in the classroom. As we address the proper balance of AI in the humanities, we must do so in a visionary context that accounts for the increasing pressure of the market on higher education, the real fiscal challenges facing almost all institutions, the drop in public trust, the intrusion of polarizing politics into the sacred intellectual space, competing non-accredited options for skill development, the growing intolerance of students for long degree programs, the demand for online education, the exponentially rising cost of essential technology for a digital campus, the expectation of customer service and resultant grade inflation, the ever-expanding scope of auxiliaries expected of a “university experience,” and so on. AI is but one of these challenges. It must not be neglected as a challenge, but the solution is not exclusion. AI will be a part of higher education. Our task is to identify its appropriate role alongside these other phenomena.

Think in Terms of a Paradigm Shift Rather than Tweaks

In 1962, Thomas Kuhn published in book form The Structure of Scientific Revolutions.[31] In this influential work, he argued that scientific progress is less incremental and more punctuated. Thus, the field advances both by “normal science,” conducted within an established paradigm of shared assumptions, standards, and methods, and by “extraordinary science,” which leads to the establishment of a completely new paradigm. Successive paradigms are incommensurable, in that they are so fundamentally different in their assumptions, norms, and methods that they cannot be directly compared with one another or translated one to the other.

Kuhn’s model may serve us well as we think about AI. Perhaps we should consider what we are undergoing as at least a technological paradigm shift.[32] How we think about writing will likely change drastically over the next several years.[33] How we think about plagiarism will likely have some significant realignment as a result.[34] The jobs that are impacted, how they are impacted, and the degree to which they are impacted are all still in flux. Even what skills are most valued is being reshaped.[35]

All of these point to a significant paradigm shift in progress. As a result, it seems to me unwarranted to hold to an absolute and unchanging position on AI, or on any downstream questions, based on the current status quo.[36] Certainly, we need working models, policies, and best practices as we endure the re-normalization process, but we must maintain a flexible and adaptable posture, adjusting our positions as the status quo changes.[37] We must always maintain a distinction between the foundational outcomes of higher education (i.e. skills, values, and competencies) and the methods used to teach and instill these in our students. The former do not change, but the latter may evolve with each new paradigm.

We must also avoid, at all costs, evaluating new possibilities and possible norms based on old standards.[38] A wise teacher once said that “no one puts new wine into old wineskins.”[39] It is so tempting to make dogmatic statements about the apparent inappropriate use of AI because we are unable to see another option. However, for those who will know only the new paradigm, they will question how we ever did otherwise.[40]

On a related note, there is a current temptation to elevate some determinations about AI to the level of values. For example, one might argue that we cannot employ AI in writing because we value intellectual property and compositional skills, and AI undercuts both values. However, once a new paradigm is normalized, one may look back and realize that this was not actually a values-based decision. In fact, the future users of AI in such a context may share the exact same values but not see AI as undermining them in the slightest. For example, many of us have learned to value intellectual property and writing skills, and have retained that value, in the presence of thesauri, spell check, grammar check, word processing (easy deletion), ubiquitous access to our sources (as opposed to mnemonic access), etc., even though each of these could undermine one or both of those values in some way. In a similar way, the next generation may retain these values in the presence of AI. They might even learn how to utilize AI to express their own voice and ideas in ways that we cannot yet conceive. The application of plagiarism standards may even continue to be refined in light of a new paradigm, just as it has been throughout history, even while the principle of assigning credit for ideas where it belongs persists.[41] Therefore, in hindsight, what looked to be a values-based position was in fact just another methodological or even pragmatic one. May we have the foresight and humility to avoid dogmatism that is just pragmatism unrecognized!

Our Students Rely on Us for Ethical and Skill Formation

Lastly, one cannot lose sight of arguably the most important aspect of Christ-centered education—the formation of our students. Our classes should contribute to their process of becoming fully devoted followers of Jesus. This includes their spiritual formation, intellectual formation, professional formation, and skillset formation.[42]

AI has touchpoints with each of these. Students should complete our degree programs with a mature understanding of what it means to be human; what qualities warrant personhood; what activity is distinctively human; how humans as image-bearers may and may not—should and should not—steward creation on behalf of our Maker; how AI may be pushing the boundaries on historic assumptions about spiritual disciplines, gospel proclamation, and worship; and how AI might actually aid their Christian journey.

They need to be able to think carefully through any new problem, separating proverbial apples from oranges. Each of our courses is the practice field for teaching students to think well and articulate thought precisely, using our discipline as the context, but not limiting the skill to that application. This includes how AI relates to our disciplines. AI will not be the ethical issue of their lifetime, but it could be their training ground.[43] If they instead receive prohibitions, then we are leaving them without the opportunity to develop these critical thinking skills, and perhaps even forming in them counter-Christian values unwittingly.[44]

Lastly, AI will impact every profession, trade, and ministry. Students in Christ-centered institutions need to know not only how to intellectually and spiritually navigate the maze of their specific professions, but they also need to know how to leverage AI in appropriate ways for their own advancement, success, and engagement.[45]

Our classrooms are the space, the lab, to work through these issues within the contexts of our specific areas of expertise, whether writing and composition, theology, biblical studies, history, philosophy, fine arts, etc. They need the space to experiment, ask any question, question the ethics of any use, try and fail, and gain first-hand experience. If we deny this opportunity to them, they may be forced to figure these things out when the stakes are much higher.[46]

One final matter must be addressed under this foundational principle. Christians did not steward the historic moments of the internet and social media well.[47] Many of us either uncritically adopted or naively prohibited these technologies. We must find another path with AI.[48]

Summary

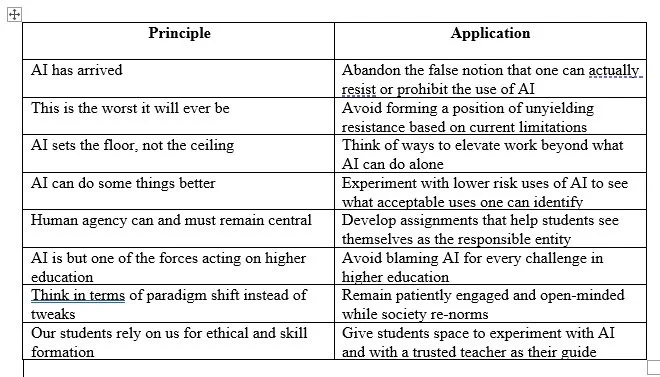

Together, these eight principles form a compelling case to engage with AI, but not uncritically or overly optimistically.[49] Each of the principles either combats a common objection (e.g. AI is a fad; AI hallucinates too much to be useful) or qualifies a misconception about those who engage AI (e.g. AI users undermine their own development). In sum, these principles defend a posture that has thoughtfully decided, not due to social influence or marketing, to engage with AI regularly in an academic context (e.g. for teaching, learning, reading, research, writing, and administrative work); to stay moderately up to date on new developments and to have preferred tools with which they are most familiar; to not become overly dependent upon AI, oversell what it can do, or expect more from AI than it can deliver; to cautiously explore and experiment with new possible uses for AI; to always prioritize, value, and highlight the responsible and accountable human behind the work accomplished with the help of AI; to not demonize AI or throw the proverbial baby out with the bathwater when challenges, dangers, or misuses become known; to stay patient and comfortable in our communal and personal learning curves on this subject, guarding speech and perspective from the extremes of both ends of the spectrum; and to equip students with information, skill, and wisdom as best as one can. Below is a table that helps tie each of these positions to the posture above.

Table 1: The Application of AI Principles

Practical Guidance for Using AI

In addition to these more conceptual foundational principles (i.e. the “why”), there are some general guidelines that may help one use AI more proficiently and adapt to it as a tool more quickly. One might consider these the “how” of using AI in very broad terms. Each is addressed in turn below.

Move from Transaction to Dialogue[50]

Engagement with computers, the web, and software has historically been very transactional. We input keywords and parameters, and we expect returns based on the relationship of our inputs to the data in the database or network (e.g. keyword searches on our library systems, web searches). Each query or engagement stands alone. We may build on them, but they are each an isolated transaction. Precise and explicit coding is also required for such tools to function. Every application is designed to accomplish a finite number of tasks. Even if that number is very large, the application is limited to the actions or functions the developer envisioned and coded the technology to accomplish. Any possible need or outcome must be anticipated and hardcoded or constructed during the design and development of the product. However, with AI bots, one can have an extended engagement and iterate toward a solution (dialogue); one may backtrack to a previous stage in the engagement and start down a new line of reasoning (branching). Every possible return does not have to be coded; the experience is more open-ended.

For example, when prompting an AI chatbot, the end user would do well to prompt only the first task to complete instead of the whole series of actions he or she would like accomplished. Once this is complete, the user can prompt the tool for the next step. This process can proceed throughout the entire project. If the results on a given step are unacceptable or not what was expected, he or she may back up and provide a clearer prompt to get different results. The process is not hardcoded from front to back, but it can be adapted at each interval. Similarly, one may provide specific contextual variables to the AI bot in order to shade or influence the results.[51] One of the most powerful aspects of AI is its seemingly conversational nature. Therefore, engaging with it in common language, with various contextual parameters, and in an iterative fashion may yield results that are more customized and valuable than what a non-AI tool may deliver.[52] In short, envisioning the process as more dialogical than transactional may allow one to maximize the distinctive power of the AI tools.

A brief comment on the word “dialogical” is in order. Some may balk at the thought of employing this word as a descriptor of our engagement with AI.[53] The personification of the machine is at the very least off-putting and perhaps even indicative of a skewed doctrine of embodiment, the imago Dei, etc. I share these concerns, and I strongly encourage all to realize that if this word is employed, it is merely a descriptor of the iterative and progressive process of prompting. It in no way ascribes personhood or any other distinctively human quality to AI.[54] Such terminology should be employed with a critically healthy mindset on the part of the human, who understands that no real dialogue is taking place. Conversation is absent, and the machine is not conversational, regardless of how it seems.

One may argue that the use of such descriptors and the seeming conversational experience may dull our senses, and eventually we will be far more comfortable engaging with machines as if they were persons. This is a valid critique as well. We use the word here only as a means of expediency and for communicative power. It is far quicker and equally meaningful simply to say dialogue rather than to describe literally what is occurring. I believe that the use of such terminology is acceptable so long as one understands that they are merely mimicking real dialogue in a simulated context.

Move from Monolithic Methods to Iterative Methods

For some, there seem to be several related misunderstandings around AI. It is assumed that students use AI in a single-step fashion.[55] Often those who think this are rightfully concerned about the impact of AI on learning. It is interesting that many of these same individuals will attempt to use AI in the same way. However, the results that they receive are less impressive and are used to reinforce the resistant posture toward AI. The paradoxical holding of both these views simultaneously has been noted above. Here, I simply want to give a brief corrective on the method in general.

AI does not meet or exceed expectations when used in this single-step or monolithic fashion. Instead, one should be prepared to iterate toward the desired outcome, and this may be done in several ways.[56] First, the user may from the outset break their desired outcome into a series of steps or phases. They may then engage with AI for each of these steps. In this way, the demands on the AI have strategically been kept as simple and focused as possible, increasing the likelihood of receiving what the user wants in the end.

Alternatively, the end user may prompt the AI more comprehensively to establish a starting point. From the results of this initial prompt, the user can then begin to work with the AI to shape, fashion, correct, and refine the result until the desired end is achieved. Both these methods involve iteration and do not rest on the mistaken assumption that AI will deliver a perfect and complete result on the first try. However, they pursue the desired outcome in distinct ways: one is more proactive, and the other is strategically reactive.

Regardless of which method is preferred, as one engages with AI, the following strategies may help guide the process closer to success. Ask the AI what else it might need to deliver on the goal of the prompt. Provide as much context as possible about the audience, tone, potential uses, etc. Give it some details about the voice and persona from which it should work. Offer some examples if they are readily available, but make sure to state explicitly how distinct from the exemplar the delivered output should be. Giving these details in iterated prompts along the way will help the end user and the AI shape the product progressively. Along the way one may find better alternatives that had not even been considered previously.
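For readers who find a concrete picture useful, the first method (breaking the desired outcome into steps and prompting one step at a time) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `run_steps`, `echo_stub`, and the sample prompts are hypothetical names invented here, and `echo_stub` merely stands in for whatever chat tool one actually uses.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def run_steps(steps: List[str], ask: Callable[[List[Message]], str]) -> List[Message]:
    """Send one focused prompt at a time, carrying the conversation forward."""
    history: List[Message] = []
    for step in steps:
        history.append({"role": "user", "content": step})
        reply = ask(history)  # hypothetical call to a chat tool
        history.append({"role": "assistant", "content": reply})
    return history


def echo_stub(history: List[Message]) -> str:
    """Stand-in for a real chatbot so the sketch runs offline (an assumption)."""
    turn = sum(1 for message in history if message["role"] == "user")
    return f"[draft reply to step {turn}]"


# Hypothetical prompts: each one asks for a single, focused task.
steps = [
    "Here is my audience, tone, and goal. What else do you need from me?",
    "Propose three possible outlines for the assignment sheet.",
    "Expand the second outline into a first draft.",
]
transcript = run_steps(steps, echo_stub)
```

In this picture, backing up to an earlier stage amounts to discarding the later entries in the transcript and issuing a clearer prompt from that point.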

Recognize the Analogues

In an effort to identify acceptable uses of AI, I have found it helpful to think about certain parallel situations that do not involve AI or in some cases even any digital tool. For example, in evaluating the use of AI for summary of certain reading assignments, it may be helpful to remind ourselves of respected periodicals that already do such things, even if often delayed by many months or even years. AI gives one the ability to abstract any article or essay that they have in digital format.

Another example might involve human engagement. In attempting to break through a bout of writer’s block or adapt a critical communique for an especially sensitive situation, one regularly engages a colleague or trusted companion to offer an opinion and possible edits. In these instances, it would be unusual to go out of one’s way to note the involvement of this third party. It is considered informal and commonplace. Using this precedent, in the AI age, one may opt to engage their preferred AI bot to offer an alternative arrangement for their prose, whether in operational correspondence or academic composition.[57]

One final analogue may be more polarizing. Many of us might engage a colleague at various points in our research and writing process. For example, a trusted mentor may encourage us to write a response to a recent publication when they know they may not have the time to ever do so. Alternatively, we may ask a colleague to read a draft and offer counterarguments or vulnerabilities before an important presentation. In these moments, we are not intending to steal intellectual property or gain credit for ourselves that belongs to another.[58] We are simply looking for a perspective outside of our own, recognizing the common human experience of having special difficulty finding the fault in one’s own point of view once they have normalized to it.

Going forward, might we be able to leverage AI at these points in the research and writing process? Is there an appropriate manner to do so and cite when the input was especially valuable or relevant to the finished product? Will it be acceptable to upload an article in our field and ask AI to identify three research questions that would advance the conversation further?[59] At the other end of the process, could we upload our own work and prompt AI to identify assumptions, presuppositions, unsupported claims, and counter-arguments that need to be addressed before publication?

Summary

There are many unknowns about the use of AI ahead. Furthermore, the volume of specific applications for the innumerable AI tools is exhausting. Thus, keeping a few simple guidelines in mind may help one navigate an otherwise bewildering maze going forward. Approaching the tools with a dialogical process, learning to iterate toward a goal, and recognizing where we might be able to replace analogue practices with an AI counterpart may help one leverage a few of their preferred tools most successfully. No one can know or use every AI product, but these guidelines should translate across all of them.

Sample Applications of AI for a Faculty Member

Having made the case that one should engage with AI and provided some broad guidelines for doing so, I now turn to some concrete and specific uses in an academic setting.[60] The use cases below have been grouped into categories to make for easier perusal. For all categories, a preferred tool has been identified. However, one should not consider this an absolute recommendation and should adjust to one’s own preferences. This is meant as an aid for the faculty member who does not know where to begin. An attempt has been made to provide some sense of justification or benefit gained by each use. This is not exhaustive but is intended to begin to address the question of “why”—why would one even attempt to use AI in this context instead of continuing with current behavior? The possible uses below have been arranged in order of complexity and controversy.[61] Thus, those uses that are either least controversial or least complex will tend toward the top of this section, with those expected either to be more polarizing or require more experience with AI appearing toward the end of this section.[62]

Some overall qualifiers are in order at this point. First and most importantly, all use of AI should align with current law, any compliance regulations by which one is bound, and one’s institutional data policies. Thus, some of the use cases below will require either proper redaction to protect non-public data or the use of compliant enterprise tools. No personal information should be uploaded or otherwise entered into an AI tool, and no products owned by another should be uploaded to an AI tool without their explicit permission. Second, some of the preferred tools below will require paid tiers to be able to leverage all their features. I have tried to keep the free versions’ functions in mind for most. Third, prompt iteration is advised in every case below to achieve the exact result. In some cases, sample prompts have been provided as a start. However, these should not be viewed as pristine. Fourth, one’s specific needs and institutional processes will require many of the samples to be adjusted accordingly. Lastly, one must confirm and monitor whether the data entered is used to further train the AI used. If so, some of the uses below are inadvisable.[63]

Teaching Effectiveness

Preferred tools

● Perplexity

● ChatGPT

● Claude

Qualifiers

● Prompt iteration will be required to achieve the exact desired result.

● Your specific needs and institutional policies/processes will require the samples below to be adjusted accordingly.

Sample Use Cases[64]

● Upload scored but anonymized exams over a long span of teaching and prompt AI to identify areas that are potentially confusing to students based on the patterns of performance.

● Upload course evaluations, pre/post-tests, rubrics, or program assessments over a long span of teaching and prompt AI to identify my own biases and areas I may be neglecting.

● Upload course evaluations, pre/post-tests, rubrics, scored exams, and a syllabus and ask AI what the most effective learning activities are in my course.

● Upload discussion board responses or review notes (for a reading seminar) from assigned reading and prompt AI to identify areas where students are still confused or missing ideas from the reading.

Benefits and Justification

● Even if imperfect, these outputs may prompt or stimulate ideas for you that lead to improved teaching effectiveness.

● AI is skilled at seeing patterns we may not be able to see.

● AI may not share the personal biases that we possess (though it certainly has biases of its own).

● AI can process larger volumes of data than we can.

Communications

Preferred tools

● Perplexity

● ChatGPT

● Claude

Qualifiers

● These are intended to be first, rather than final, drafts.

● The professor would need to edit them manually or through iteration in the AI tool.

Sample Use Cases[65]

● Draft instructions

o Sample Prompt: I am a professor in a graduate school. One assignment for my students is to create an X. The goal of the assignment is to facilitate Y. The value of this assignment is Z. They will need to submit the finished product and supporting materials in Canvas. They have four weeks to complete the assignment, and it is worth 100 points. Please draft an assignment sheet that succinctly communicates the goal and value of the assignment, the assignment details, and detailed step by step instructions as a guide for the students. Give me two versions of this to consider.

● Draft Syllabus

o Sample Prompt: Attached is a sample syllabus. I value the categories and content represented in it. However, I would like to consider ways to organize it differently and make it more concise. Please provide me with five different versions that do this.

o Sample Prompt: Attached is a sample syllabus. Use it to learn about my class. Please provide me with two versions that are a complete reenvisioning of the syllabus.

o Sample Prompt: Attached is a sample syllabus. I am teaching a new class on X. Please draft a syllabus for this new class using the format in the attached syllabus. The assignments and textbooks for the new class are as follows: (provide text).

● Draft an announcement

o Sample Prompt: I want to encourage the students to finish the last half of the semester well. Please draft an announcement that is motivating, builds confidence in their ability, persuades them that I am here to support them and their education, and reminds them (in list format with associated due date and point value) of all remaining assignments. Conclude the announcement with the quote from X.

● Forecast areas of potential confusion for students

o Sample Prompt: What aspects of the attached announcement (or instructions, syllabus, etc.) are most likely to create anxiety or confusion for students? Please provide an alternative that has a caring tone but maintains the importance and seriousness of the subject matter.

● Edit existing communications and projects for tone and quality

o Sample Prompt: Revise this email to communicate a more sincere and caring posture while remaining serious about the topic.

o Sample Prompt: Make this announcement more enticing to students in such and such field.

o Practice a conversation with AI before you address a sensitive subject with your dean or engage with a difficult student. You will need to provide a personality sketch of the anticipated conversation partner as part of the prompt.

o Sample Prompt: How well are my materials tailored for the job posting X?

o Sample Prompt: How responsive will journal/conference X be to the abstracted article below (provide abstract)? What initial objections might an editor/program chair have?

o Sample Prompt: What will attendees at conference X think the following paper title is about? How could the title be revised to create more draw but remain true to the subject of the paper (provide paper and title)?

o Sample Prompt: Review these 5 successful book proposals to publisher/editor X. Identify trends and themes that might have contributed to their success. Critique my attached proposal on how to make it stronger in light of these tendencies. (Acquire from colleagues proposals that have been accepted by your desired publisher/editor, and obtain their permission to upload them to AI. You may also provide the web address of the publisher or editor profile to give the bot a sense of the publisher identity.)

Benefits

● Editing a generated version is more efficient than generating from scratch. Your time and expertise can be invested elsewhere for more value.

● You can minimize unnecessary miscommunications that will rob more time from you or create unnecessary anxiety in your students.

● You can actually deliver on sentiments/ideas that you want to express to your students but never can quite find the time to do.

Visuals and Image Generation

Preferred tools

● Nano Banana

● Adobe Firefly

● Perplexity

● ChatGPT

Qualifiers

● These examples can be used by the professor or allowed for use by the student.

Sample Use Cases

● Images for learning (e.g. presentation or handout)

o Sample Prompt: Generate an image that represents the process and challenges of learning to write well. Present this image as an impressionistic painting.

o Sample Prompt: Generate a conceptual image that will serve as a mnemonic aid for my students regarding my lecture on Genesis. Attached are my notes. My three main ideas are A, B, and C. Make the image comical.

● Visualization of Data

o Sample Prompt: Attached is a comprehensive list of manuscripts, witnesses, citations/quotations, and reception of ancient texts. Create two visualizations. The first should illustrate in a comparative framework the number of witnesses for each ancient text and the respective date for each witness. The second should illustrate the frequency of citation and reception for an earlier work. Use the categories of biblical, apocryphal, ancient Near Eastern, and pseudepigraphic that occur in the spreadsheet to group the texts.

Benefits

● The professor can aid student recall, provocation, and engagement with the use of customized images or visuals.

● If allowed for students, they can build AI literacy and experience.

Simulation and Role Play

Preferred tools

● Perplexity

● ChatGPT

Qualifiers

● AI cannot simulate perfectly.

● AI can simulate better if provided more parameters, more clarity, or artifacts that represent the position or persona it is assuming.

Sample Use-cases[66]

● Simulate gospel conversations

o Sample Prompt: You are a devout Hindu. I am a Christian evangelist/missionary. I want to share the gospel of Jesus Christ with you. I will engage you in a conversation that will lead to a presentation of the gospel message and an invitation to live by these beliefs. Make friendly conversation with me until you hear the gospel presentation. Shift your tone to one of skepticism and present objections to accepting and adopting my belief system. You may object on any grounds, whether logical, historical, hypocritical, or personal, but do not be limited by the examples I have provided here. As I overcome your objections, become slowly but progressively more willing to consider my gospel message. I would like to go between 5 and 10 rounds before you accept the gospel. Your objections may stay within the same vein or shift as needed. Use the first attached document to learn how I understand the gospel. Use the second attached document to gain an understanding of Hinduism. You may go beyond this document to form your understanding of your position. When I prompt you with “how could I have addressed your objections differently,” reveal additional strategies I could have taken to appeal to you in other ways.

▪ The student may mix categories (Brazilian Roman Catholic with strong animistic beliefs) or provide non-categories (e.g. non-religious materialist, raised in a Christian context but now lonely and wounded, nominally Christian)

▪ If the AI is not consistent with the requested position, this is not a weakness but a strength in that few people are completely consistent within their belief systems

▪ Of course, any of the parameters may be adjusted.

▪ The more you provide the AI with the specific beliefs, the better it will be able to align with your assumed categories and labels

▪ The dialogue may be exported and uploaded for grading

▪ The value of this is that you can mix categories and create engagement with personas that aren’t regularly available to your student in person or even easily identified.

● Simulate clinical sessions for counselling

● Simulate apologetic and/or ethical conversations

● Simulate a debate between two positions

o Sample Prompt: Assume an academic knowledge of an undergraduate/graduate student. I want to debate X topic with you. I will take the position of A. In a friendly/semi-hostile tone, challenge my position with alternative data, evidence, arguments, and perspectives. Do not support my side, but only yours. Carry the debate on for at least 5 rounds. Make your responses of comparable length to mine. When I tell you that the debate is over, reveal additional arguments, evidence, or sources that I could have presented to strengthen my position in the debate.

▪ The student may adjust the level of competition they want from the AI.

▪ The student can adjust the tone they want from the AI (friendly, semi-hostile, etc.)

▪ More context, specificity, or parameters will improve and customize the experience.

▪ The student can specify the debate topic or can provide a list of topics and let the AI choose in order to practice mastery for a broader body of knowledge, perhaps in preparation for a class debate or exam.

▪ The dialogue may be exported and uploaded as an assignment.

▪ The debate may stand alone as a graded assignment or learning opportunity.

▪ The debate may be a follow-up or “application” assignment to a research/position paper assignment to assess how well they have understood the complexities of the issue, mastered their position, and anticipated the counter position in their research.

▪ The debate may be used as part of the preparation and writing of a research/position paper to help expose where they may still have weakness and vulnerability in their mastery of the research question.

● Simulate the position of historic people on specific issues

o Sample Prompt: How would Augustine, Thomas Aquinas, and Friedrich Nietzsche distinctively approach the question of AI? Attached is a sample writing from each to help inform your response.

● Simulate an interview with a historic (or biblical) persona

● Preparation for thesis or dissertation defense

o Sample Prompt: Attached is a sample chapter from my master’s thesis. Assume the role of an examining reader with high academic standards. Ask me questions that will force me to defend my ideas. Ask follow-up questions from my responses. Make the questions progressively sharper and more difficult. Focus on assumptions/presuppositions, weaknesses of argument, lack of evidence, unsupported claims, and overlooked counterclaims in your questions.

● Preparation for comprehensive or summative exam

o Sample Prompt: The following bibliography represents a body of material over which I will be comprehensively tested. Assume the role of an academically principled seminary/university/grad school professor. Ask me doctoral/PhD level questions about these sources or the concept and arguments made within them. Feel free to ask follow-up questions in response to my answers in order to find my level of mastery.

▪ May use aural or text mode

▪ May work from bibliography or specific works

● Preparation for work, church, or academic placement/program interview

o Sample Prompt: Assume the role of an academic search committee at university X. Review their institutional culture, academic programming, and job posting here (insert links or attach materials). Attached are my application materials. Ask me interview questions that would be likely to come up. Focus on my fit and qualifications.

Benefits

● The student may practice before a live experience.

● The student may gain exposure to things to which they do not have access in the “real world.”

● The student may be provoked to consider the relationship between various perspectives.

● The student may test their mastery of a given body of knowledge.

Tutoring

Preferred tools

● NotebookLM

● Perplexity

● ChatGPT

● Claude

Qualifier

● A custom chatbot could aid and expedite this for students significantly as well as allow the professor to put permanent parameters, a core knowledge base, tone, limitations, expectations, and assumptions in place.

Sample Use-cases[67]

● Allow students to use AI to quiz themselves on assigned reading in order to gain comprehension.

● Allow students to use AI to explain complicated concepts.

● Allow students to use AI to create sample practice problems.

● Allow students to ask AI for concrete examples of an abstract concept the student is struggling to connect to reality.

● Allow students to ask AI to explain a concept from a less familiar area in terms of an area with which they are more familiar.

● Allow students to ask for feedback on exam essays or short answer questions on which they scored low in preparation for the final exam.

● Allow students to ask AI for the relevance of a given class or concept (the why!).

● Allow students to create study cards from class notes.

● Allow students to prompt AI for help improving their writing, expression of ideas and opinions, or sermons before the final submission or between a first draft and final submission. AI can be restricted from doing the work for them and instructed instead to prompt the student with questions.

● Allow students to engage with AI on a comprehensive capstone project to ensure all assumptions, strategic planning, and execution are in line.

Benefits

● Repetitions of content are increased.

● Connection with the student’s starting place is enhanced, allowing for greater growth/movement.

● Students can leverage their current skills and knowledge to increase in an area that is more difficult for them.

● Reduction of questions and needs that may otherwise rise to the professor.

● Access for the student to resources when the professor is not available.

● If a custom chat bot is used, the professor can have greater influence over the information that is shaping the student’s formation than is the case currently, given the non-curated information available on the internet.

Assessment

Preferred tools

● Perplexity

● ChatGPT

Qualifiers

● This addresses assessment design in concept. Canvas may limit or enhance the delivery of this to students.

● Keep custom exams within requirements for degree programs, non-discrimination policies, etc.

Sample Use-cases[68]

● Generate an exam from your materials and reading assignments

● Generate gradated exams for academic level and/or course background

● Generate diversity of exams to mitigate cheating

● Generate more low-weight exams or quizzes to mitigate cheating

Benefits

● Custom exams adapted for academic level and experience increase learning.

● More exams with lower impact per exam decrease the temptation for cheating.

● More diverse/customized exams minimize the opportunity for cheating.

Reading

Preferred tools

● NotebookLM

● ChatPDF

● Humata

● Perplexity

● ChatGPT

Sample Use-cases

● Allow students to generate reading summaries/abstracts

● Allow students to query assigned reading in AI

● Allow students to compare their own assessment of assigned reading with AI’s evaluation

● Allow students to ask for help from AI with complex reading (see also the “tutoring” section above)

Benefits

● Increases distinctive reps for engagement with material supporting memory and comprehension.

● Students can leave with artifacts for later reference.

● Allows for a conversation with reading to develop, which naturally supports the research and learning process.

Writing

Preferred tools

● NotebookLM

● LitMaps

● ChatGPT Canvas

● Perplexity

Qualifiers

● This method converts the classroom to the “practice field” instead of the “game field.”

● This method could be optimized with a custom chatbot.

Sample Use-cases[69]

● Moving from “0” to a narrowed topic to a research question.

● Generating a bibliography (or LitMap)

● Strengthening the argument of a paper

● Transition drafting practice

● Audience tailoring practice

Benefits

● The student is able to be “walked” through the process and coached along the way.

Grading

Preferred tools

● Perplexity

● ChatGPT

Qualifiers

● Full disclosure to students is expected.

● Some allowance for student use of AI is appropriate in response.

● A pathway for grade contestation is essential.

● Clear and robust training/calibration of the AI is required for serious outcomes.

● Testing and spot checking are advised for each grading session.

● Refining for unexpected scenarios may be needed.

Sample Use-cases[70]

● Grade research papers

o Sample Prompt: Grade the attached paper within the following framework. Attached is a rubric with possible scores for ten outcomes/areas. Assess the paper for each outcome and assign a score. Sum the scores for a total score on the paper up to but not exceeding 100. Provide a completed rubric with outcome scores and the total score. Provide commentary/markup for the paper with associated page numbers and text relevant to each comment. Attached are examples of a perfect paper, a B paper, a C paper, a D paper, and an F paper. Assess style with the Chicago Manual of Style, 18th edition.

● Grade essay exams

o Sample Prompt: Similar to the prompt for papers above, but adapted for essay and short answer questions. Provide an ideal essay, very clear parameters, and well-defined instructions for its “decision-making pathways.”
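As one possible aid to the spot checking advised in the qualifiers above, the arithmetic of an AI-completed rubric can be verified mechanically before a score reaches a student. The sketch below is illustrative only: the function name `check_rubric`, the outcome labels, and the assumption that each of the ten outcomes is worth at most 10 points are hypothetical and should be adjusted to one's actual rubric.

```python
from typing import Dict, List


def check_rubric(scores: Dict[str, int], reported_total: int,
                 max_per_outcome: int = 10, max_total: int = 100) -> List[str]:
    """Return a list of problems found in an AI-completed rubric (empty if none)."""
    problems: List[str] = []
    # Assumption: every outcome shares the same maximum score.
    for outcome, score in scores.items():
        if not 0 <= score <= max_per_outcome:
            problems.append(f"{outcome}: score {score} outside 0-{max_per_outcome}")
    # The total reported by the AI should equal the sum of the outcome scores.
    actual = sum(scores.values())
    if actual != reported_total:
        problems.append(f"reported total {reported_total} does not match sum {actual}")
    if reported_total > max_total:
        problems.append(f"reported total {reported_total} exceeds {max_total}")
    return problems


# Hypothetical example: one score is out of range and the total is mis-summed.
issues = check_rubric({"thesis": 8, "evidence": 12}, reported_total=19)
```

A check like this catches only arithmetic slips. It does not replace reading a sample of the AI's commentary against the papers themselves.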

Benefits

● More objective and consistent feedback and scoring.

● Quicker turnaround on feedback and scores.

● Higher-order learning unlocked for assessment design.

Enterprise AI Tools for Academic Use

All the use cases above employ personal-grade products, and the vast majority of them are chatbots. However, the race to develop AI functions in long-standing academic technology is fast and furious. Nearly every category of academic software (student information systems, learning management systems, academic operations systems, library systems, customer relationship management systems, video-conferencing tools, enterprise resource and personnel systems, etc.) has rapidly been developing AI functionality. Therefore, the faculty touchpoints with AI are likely already in place and increasing. Below is a very abbreviated listing of some of the most common institutional systems and how AI might already be in production at one’s school. If not already, it will certainly be the case soon, unless your system administrators or institutional administrators have opted to keep these functions out of production (assuming the developers have allowed for this option).

Learning Management Systems

● Example Products: Canvas, Blackboard, Brightspace

● Possible AI Uses: grading, communications, exam generation and customization

Student Information Systems (and ERPs)

● Example Products: Colleague, Banner, Workday

● Possible AI Uses: advising, student analytics, communications, retention and marketing, degree planning and continuing education

Library Tools

● Example Products: EBSCO

● Possible AI Uses: natural language searches, lit-mapping, file summary

Conferencing Tools

● Example Products: Zoom

● Possible AI Uses: meeting summaries and minutes, class notes generation, follow-up action items, quiz generation from content

Potential Benefits of Engaging AI for Pedagogy

In summary and speaking very broadly, the use of AI at the personal or institutional level has the potential to realize certain benefits or advantages (listed below). These are provided in order to counter the tendency of some of us to see any adoption of AI as a concessive exercise, in which the goal is to give up as little ground as possible. Contrary to this perspective, I would like to offer the possibility that the adoption of AI may actually enhance our academic product and experience in some ways. I do not claim this as a fact, but I offer it as a possibility on which we need to keep our eyes as we move forward.

Time-saving advantages may materialize for the professor and student.

The quality of assignments may be enhanced.

Students may achieve deeper learning through repetition (cf. Bloom’s Taxonomy).

Students can be exposed to a greater scope of material.

More objective and consistent feedback and assessment may be provided for the student.

A quicker turnaround on grading can be provided to the student.

Students may have support in off-hours and more coaching touchpoints via custom bots.

Education and assignments may be more customized and personalized.

The student may produce more artifacts to take with them for reference after the course.

There may be more opportunities for practice in a degree program before performance in a career or ministry.

Conclusion

The question is not whether AI will impact education in general and higher education specifically, but how faculty will steward this season of technological change. Some of us have opted for a more hesitant or even resistant posture, perhaps associated with the ideals of an academic purist. Some of us may even be adversarial to change brought about by AI, perhaps influenced by more fundamental and philosophical convictions. We are likely sympathetic to these convictions and have our healthy share of concerns. Some of us may be eager (even overly or uncritically eager) to get going with AI, but do not know where to begin. The steepness of the learning curve and the rate of change are admittedly intimidating, especially for those of us who do not have expertise in technical fields.

My ambition with this essay has been to hold open the space for a position of critical engagement with AI. I am not advocating for uncritical adoption. I am not arguing against the philosophical opposition (in fact, I believe it is important and healthy for this group and their position to “have a place at the table,” so to speak). I am not interested in lowering standards or undermining learning in higher education; my interests are quite to the contrary.

I believe that one may engage with AI and do so in a manner that honors Christ, our God and King; reserves ample space for disagreement, dialogue, and even spirited debate; upholds and possibly elevates the ideals and values of higher education; respects our colleagues; advances our institutional missions and supports the administrators who steward them;[71] and preserves the dignity of the one who holds this position. For those who may be looking for a way to approach AI or those who did not consider open engagement as an option for a critically minded Christian, I pray this essay has been of some help to you. For those who find themselves unsympathetic to the position advocated here, I pray some of the content here has helped you clarify and solidify your own posture.[72]

Appendix: An Abbreviated Theological Framework for Approaching AI[73]

Statement

Artificial Intelligence (AI), being properly understood as unnatural intelligence (i.e. perceived intelligence that exists outside of the natural order of humans and animals), is something neither to be revered nor to be feared. We do not look to it for deliverance from any current condition found among humankind in the world. Neither do we spurn it as evil, intrinsically harmful, or irredeemably corrupt. We recognize that like most technologies, whether analogue or digital, if not properly restrained it will likely “speed up” the pace of life and the overall human experience, and we mourn the depleting moments to “be still” in the modern world. We also acknowledge that as an arrival technology, there is no escaping or avoiding its usage if we wish to remain social creatures and economically connected.

Therefore, we will not absolutely resist the deployment or adoption of so-called AI tools. Instead, we will welcome their use where they may potentially remove toil for students, faculty, staff, alumni, or friends. We also and even especially welcome their use in instruction where they may prove to equip current and future student-ministers for life, vocatio, and service in the AI-age. However, we recognize that some uses will conflict with what some or all may consider to be distinctively human activity or work. Therefore, critical thought, dialogue, and charity are essential in any deployment of AI for instruction or academic operations. We will also respect the freedom of conscience of members of our communities who do not share such an optimistic outlook. Allowance for a stronger abstinence from AI tools will be made so long as it does not otherwise disrupt alignment with our Christian culture or adoption of our core values, thwart or work contrary to the mission of our institutions, or prohibit one’s ability to contribute at pace to such culture and mission.

We perceive this statement on AI to be a middle ground between those who are uncritical adopters or excessive optimists on the one hand and those who absolutely resist it or who are ignorant of its already ubiquitous presence on the other. We will look to this statement, the supporting propositions, and commentary below to guide our thinking, acting, and instructing as we move forward in this new age.

Supporting Propositions

The statement above is grounded in and supported by the following propositions:

AI has arisen in God’s providential timing and operates only under his providential care.

God is immutable, and his values have not changed.

God alone remains and will remain the sole uniquely creative being.

Humans operate as God’s imagers and do well when we express creativity within our confines on the earth.

Sin has corrupted creation including our creative expressions that employ AI.

God’s rule on this earth may be increased through the use of AI tools.

Commentary

Each of these propositions is grounded in the Scriptures, historic biblical doctrine, and the creeds and confessions of the church. Below, the broad doctrinal, confessional, and scriptural grounding for each of the propositions receives a brief commentary. Together, the propositions and commentary ground and buttress the overarching statement above.

Divine Providence (Proposition 1)

This is what the Lord says: Heaven is my throne, and earth is my footstool. (Isaiah 66:1)[74]

A king’s heart is like channeled water in the Lord’s hand: He directs it wherever he chooses. (Proverbs 21:1)

…until you acknowledge that the Most High is ruler over human kingdoms, and he gives them to anyone he wants. (Daniel 4:32)

Consider the birds of the sky: They don’t sow or reap or gather into barns, yet your heavenly Father feeds them…. Observe how the wildflowers of the field grow: They don’t labor or spin thread. Yet I tell you that not even Solomon in all his splendor was adorned like one of these. (Matthew 6:26–29)

Aren’t two sparrows sold for a penny? Yet not one of them falls to the ground without your Father’s consent. But even the hairs of your head have all been counted. (Matthew 10:29–30)

The doctrine of divine providence is listed first here because it also proceeds first logically and confessionally in this approach to AI. It is the foundation upon which this framework is built and is assumed within all of the propositions above. If this principal doctrine is undone, the strength of the others is weakened. God’s providential care of his creation rests first in our minds as we approach the subject of AI or engage AI tools. It is essential to rehearse that this doctrine declares not only that God is capable of anything he desires, but also that he employs his wisdom and power in the service of his care for his creation. Thus, divine providence is a supreme comfort for those who serve the Lord and embrace this truth. Whether directing the will of kings, preserving the flora and fauna of the earth, or even giving insight and resources to “big tech,” the Lord is directing the paths of actors and stewarding that which is his, namely all of creation. He is king over all things, including big data.

Divine Immutability (Proposition 2)

Because I, the Lord, have not changed, you descendants of Jacob have not been destroyed. (Malachi 3:6)

Every good and perfect gift is from above, coming down from the Father of lights, who does not change like shifting shadows. (James 1:17)

Jesus Christ is the same yesterday, today, and forever. Don’t be led astray by various kinds of strange teachings; for it is good for the heart to be established by grace and not by food regulations, since those who observe them have not benefited. (Hebrews 13:8–9)

The God who acted in creation, who covenanted with ancient Israel, who delivered his Son for the sins of the world, who promised to bring about a new heavens and new earth is the same God who is known through the Scriptures today, who guides his church, and whom we all serve through our respective vocational ministries. Therefore, the attributes and values that may be discerned of this deity through the sacred Scriptures may be assumed of God in our approach to ethical matters today, including AI. Accordingly, this framework holds that God is sovereign, wise, powerful, holy, purposeful, and capable of using broken beings and tools for his purposes. These attributes are in full view and celebrated in our approach to AI. AI, then, will not threaten God’s character or his mission as we partner with him.

The Holiness of the Lord (Proposition 3)

For this is what the Lord says—the Creator of the heavens, the God who formed the earth and made it, the one who established it (he did not create it to be a wasteland, but formed it to be inhabited)—he says, “I am the Lord, and there is no other.” (Isaiah 45:18)

And one called to another: Holy, holy, holy is the Lord of Armies; his glory fills the whole earth. (Isaiah 6:3)

He made the earth by his power, established the world by his wisdom, and spread out the heavens by his understanding. (Jeremiah 10:12)

For his invisible attributes, that is, his eternal power and divine nature, have been clearly seen since the creation of the world, being understood through what he has made. (Romans 1:20)

He is the image of the invisible God, the firstborn over all creation. For everything was created by him, in heaven and on earth, the visible and the invisible, whether thrones or dominions or rulers or authorities—all things have been created through him and for him. He is before all things, and by him all things hold together. (Colossians 1:15–17)

The Lord alone is holy. He is completely unique. There is no other like him in heaven or on earth. This is grounded in his role as creator and exhibited in his creative work over all things in our known world, both visible and invisible. While mankind mimics its heavenly Father and exhibits creative tendencies, there is none who is as creative as the Godhead. Thus, humanity may fashion with AI, but what it fashions will still pale in comparison to the creative work of the triune God. However man may marvel at AI and describe its outputs as creative, these descriptions remain heuristic only, since AI merely executes its coding. There is only one who is truly creative, and his holiness is manifest in this exclusive quality.

Mankind as the Image of God (Proposition 4)

Then God said, “Let us make man in our image, according to our likeness. They will rule the fish of the sea, the birds of the sky, the livestock, the whole earth, and the creatures that crawl on the earth.” (Genesis 1:26)

Whoever sheds human blood, by humans his blood will be shed, for God made humans in his image. (Genesis 9:6)